The rankings race comes for the lab

For years, public debate on South Korean campuses has centered on issues Americans would immediately recognize: tuition costs, student debt, admissions pressure and the future of higher education in a shrinking youth population. This spring, though, another issue has surged to the forefront — one that sounds more like a scandal from the world of elite sports than academia. In South Korea, professors and researchers are increasingly using the phrase “academic mercenaries” to describe a growing market of outside services that can help produce, polish or even reshape scholarly papers for a fee.

The term covers a broad range of practices. At one end are relatively ordinary services that exist in universities around the world, such as English-language editing or data visualization support. At the other end are ethically far more troubling arrangements: outsourced research design, statistical analysis tailored to produce favorable results, ghostwriting and, in some cases, allegations involving improper authorship — adding or deleting names on papers for career advantage rather than genuine scholarly contribution.

What has made the issue so combustible in South Korea is not simply the existence of these services. It is the feeling among many faculty members that the pressure to climb global university rankings has turned them from a supplementary convenience into part of a distorted academic ecosystem. In other words, the concern is not only about individual misconduct. It is about a system that increasingly rewards visible output — publication counts, citation metrics, international journal placements — over slower, harder-to-measure scholarship.

That complaint will sound familiar to many Americans who have watched colleges chase U.S. News rankings, SAT averages or flashy construction projects. But in South Korea, the pressure is especially intense because rankings can influence not just prestige, but funding, student recruitment, faculty hiring and, for some institutions, long-term survival. In a hypercompetitive education culture already famous for its high-stakes college entrance exam, known as the CSAT or suneung, the logic of constant measurement has spread well beyond undergraduate admissions and deep into the research enterprise itself.

The result, critics say, is a paradox. South Korea is one of the world’s most research-intensive economies, spending more than 4% of its gross domestic product on research and development — a level that exceeds that of many wealthy nations. Yet some scholars argue that the people best positioned to thrive are no longer necessarily those doing the most careful or original work, but those most skilled at navigating a metric-driven system.

How global rankings shape behavior on campus

University rankings were once marketed as a rough consumer guide, a way for students, families and policymakers to compare institutions. In practice, they have become something much more powerful. Global rankings such as QS World University Rankings and Times Higher Education rely heavily on factors tied to research visibility, citations, academic reputation and international collaboration. While each system uses its own formula, the underlying message to university administrators is clear: produce more research that is noticed, cited and internationally legible.

That logic filters quickly down the chain. Presidents and provosts want their institutions to rise in the rankings. Colleges and departments are then pressed to improve measurable performance. Individual professors face promotion, tenure-equivalent review or contract renewal systems that often place significant weight on the number of papers published in high-impact journals, the amount of grant money won and whether a faculty member is listed as a corresponding author or part of an international collaboration.

On paper, quantitative evaluation can look objective. It appears cleaner than subjective judgments about intellectual merit. But scholars in South Korea, as in the United States, say those metrics often flatten important differences across disciplines. A medical researcher participating in large collaborative studies may publish multiple papers a year. A historian or sociologist may spend several years building an archive, conducting interviews or writing a major single-author book. When institutions rely too heavily on standardized metrics, the incentive structure tilts toward speed, volume and visibility.

South Korea’s demographic crisis has intensified that pressure. As the number of college-age students declines, universities — especially those outside Seoul and other major metropolitan areas — are competing fiercely to attract applicants and secure government support. A higher ranking can help reassure families, win partnerships and burnish a school’s image. In that environment, administrators may feel they cannot afford to ignore any lever that improves “performance.”

That is how an indicator meant to describe academic quality can begin to govern it. And once rankings become a de facto management tool, they also create strong incentives for faculty to seek shortcuts, gray-area assistance or ethically dubious help if it means keeping pace with institutional expectations.

What counts as help, and what crosses the line?

One reason the controversy has become so heated is that the boundary between legitimate support and misconduct is not always obvious. In American universities, too, scholars routinely seek help from editors, statisticians, graphic designers and research assistants. Many journals allow language editing. Some funding agencies encourage interdisciplinary collaboration that naturally includes outside technical expertise. The problem begins when assistance changes from support into substitution — when the outside party is no longer helping communicate or analyze the research, but effectively producing the work or shaping the conclusions.

Authorship is one of the sharpest flashpoints. International standards such as those promoted by the International Committee of Medical Journal Editors, or ICMJE, generally require a substantial contribution to a study’s conception, design, data collection, analysis or interpretation, participation in drafting or critically revising the manuscript, approval of the final version and accountability for the work. On paper, those rules are clear. In practice, scholars around the world know that authorship can become a form of currency. A senior academic’s name may add prestige. A junior researcher may be left off a paper despite doing significant labor. A collaborator may be added to strengthen a résumé, cement a relationship or improve prospects for future funding.

In South Korea, critics say the rankings and evaluation system have increased the temptation to treat authorship as a negotiable asset rather than a record of intellectual contribution. If a paper counts toward a professor’s annual review and a university’s overall output, there is pressure to maximize the number of papers and the strategic value of the names attached to them. That can make improper authorship not just an ethical lapse, but a structurally rewarded one.

Statistics and writing support present another murky area. Consulting a statistician is not inherently suspicious; in many fields, it is good practice. But if the goal becomes finding a method that will produce a statistically significant result rather than honestly testing a hypothesis, the problem shifts from technical assistance to manipulation. Likewise, there is a difference between asking a native English speaker to smooth awkward phrasing and paying a service to reconstruct the paper’s logic, argument and framing so extensively that the named authors are no longer the true authors in any meaningful sense.

That blurring of responsibilities matters because modern scholarship depends on trust. Journal editors trust that the listed authors stand behind the work. Universities trust that their faculty’s records reflect real achievement. Students trust that the system rewards merit. Once those assumptions start to fray, the damage spreads far beyond a single article.

Why this is more than a story about a few bad actors

Many South Korean professors objecting to the trend say the public should resist reducing the controversy to personal morality tales. To be sure, some conduct is plainly unethical. But the anger on campuses is also directed at the structure surrounding those choices. In the view of many faculty members, the system increasingly punishes careful scholarship and rewards tactical productivity.

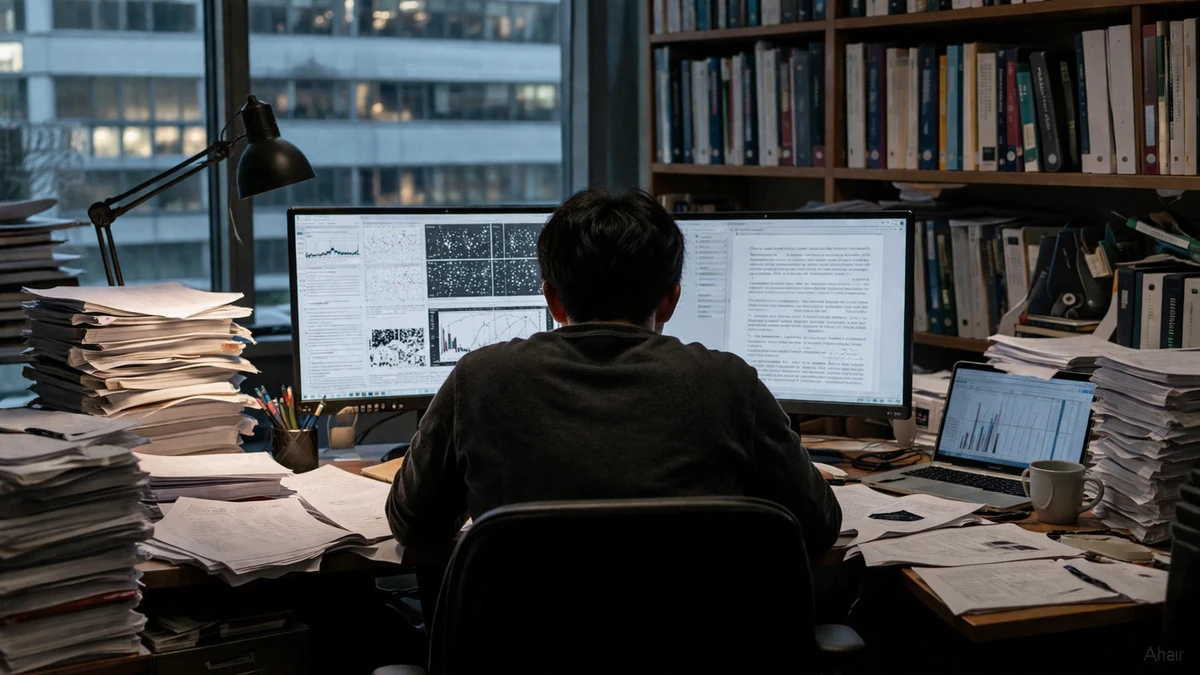

Consider the difference between two researchers. One spends several years gathering original data, conducting fieldwork and writing a deeply argued paper that may have lasting value but produces little immediate numerical output. Another assembles a faster stream of publishable papers by relying heavily on outside assistance for editing, analysis or manuscript preparation. If promotion committees and administrators are looking first at counts, impact factors and citation measures, the second researcher may appear more successful, even if the first is doing more intellectually significant work.

That dynamic is intensified by workload. Professors in South Korea, especially at regional or mid-sized institutions, often juggle teaching, advising, department administration, committee work and grant management alongside research. American faculty members would recognize the complaint. But in a system where international publication is increasingly mandatory, time becomes one of the most precious and scarce resources. The less time scholars have to write, revise or learn the conventions of English-language academic publishing, the greater the incentive to turn to outside help.

The pressure of globalization compounds the problem. Publishing in English-language journals is treated as a baseline expectation in many disciplines. Yet research ability and English fluency are not the same thing. A scientist may produce excellent work and still struggle to write for an international journal audience. A social scientist may understand local realities deeply but feel disadvantaged in framing arguments for global publication markets. When universities demand internationalization without building adequate training and support, they create a vacuum that private services are eager to fill.

That is one reason the phrase “academic mercenary” has resonated so strongly. It captures the sense that scholarship itself is being sliced into purchasable components — idea development, data work, manuscript polishing, authorship strategy — that can be outsourced by those with enough money, institutional backing or desperation. Critics fear that once that market becomes normalized, the values that sustain academic life begin to erode from the inside.

The young researchers paying the highest price

If there is a group likely to absorb the worst consequences, it is graduate students, postdoctoral researchers and other early-career academics. In both South Korea and the United States, these scholars often do much of the day-to-day labor that makes research possible: running experiments, cleaning data, drafting sections of papers, preparing conference presentations and helping manage lab administration. They are also the people with the least power to challenge questionable practices.

In a lab or research group where publication output directly affects a professor’s evaluation, grant prospects and institutional standing, students can become the front line of performance pressure. They may be expected to produce results quickly, accept ambiguous authorship arrangements or tolerate outside intervention in the writing and analysis process without fully understanding how those choices could affect their own reputations. If credit is allocated unfairly, junior researchers lose twice — first in recognition, then in career opportunity.

The consequences can extend well beyond one paper. Postdocs and contingent faculty frequently work on short-term contracts and must assemble publication records quickly to remain competitive. If some peers are benefiting from ghostwriting, questionable authorship swaps or aggressive paper-production services, those trying to play by stricter rules may look unproductive by comparison. Over time, that can drive talented researchers out of academia altogether.

For South Korea, which has long invested heavily in education as a national growth strategy, that is no small matter. The country’s remarkable economic transformation over the past half-century — from war-torn poverty to a global leader in semiconductors, consumer electronics, biotechnology and culture — has depended in large part on human capital. If young scholars come to believe that academic advancement depends less on rigor than on gaming metrics or buying assistance, the long-term damage could be profound.

This generational dimension also helps explain why the controversy carries emotional force. It is not simply about preserving the dignity of professors. It is about whether the next cohort of scholars will inherit a system in which trust, mentorship and intellectual labor still matter.

Retractions are only the visible part of the problem

One reason research integrity debates often gain traction only after a scandal is that the most serious cases leave a public paper trail. Articles are retracted. Universities open investigations. Funding agencies review grants. Headlines follow. But experts in publication ethics often note that retractions are a lagging indicator, not a complete census of wrongdoing.

Globally, the number of retracted papers has risen sharply over the past decade, driven by a mix of better detection, expanding research output and, in some fields, organized fraud. International databases now track thousands of retractions each year, a figure that would have seemed astonishing a generation ago. The increase does not mean every field is collapsing into misconduct, but it does suggest that the pressure to publish can create vulnerabilities that bad actors exploit.

South Korea has seen its share of research ethics controversies before, and the country’s public is unusually sensitive to them because academic reputation carries such social and institutional weight. Retractions can have consequences that go far beyond an individual paper. A university department may suffer reputational damage. Grant money may be scrutinized or clawed back. Students associated with a tainted lab may find their own prospects harmed by guilt by association. In a system where rankings and public image matter deeply, a single high-profile case can ricochet through an institution.

Still, retractions represent only the cases that surface. The larger concern raised by the current controversy is the normalization of gray-zone behavior that may never trigger a formal investigation but still undermines scholarly credibility. A paper that survives peer review is not necessarily a paper produced under fair or transparent conditions. If authorship is inflated, if key analytical decisions were effectively purchased, or if writing support crossed into ghostwriting without disclosure, the paper may never be retracted and yet still distort careers and incentives.

That is why many scholars argue the conversation should not start and end with punishment. It should also ask how evaluation systems create demand for exactly the kind of services universities later claim to condemn.

What reform could look like

If South Korea wants to address the “academic mercenary” problem in a durable way, experts say it will need more than periodic crackdowns. The core challenge is structural: aligning institutional incentives with the values universities say they support.

One obvious starting point is greater transparency in authorship and contribution. Many journals already require contributor statements specifying who designed the study, gathered data, performed analysis and wrote the manuscript. Universities and funding agencies could reinforce those norms by making detailed contribution disclosure standard in promotion reviews and grant reporting. That would not eliminate misconduct, but it would make inflated or symbolic authorship harder to hide.

Disclosure rules for outside assistance could also be strengthened. There is nothing inherently improper about language editing or statistical consultation. But when those services are used, journals and institutions could require authors to declare them clearly, just as many publications require disclosure of conflicts of interest or AI-assisted writing tools. Transparency would help distinguish legitimate support from undisclosed substitution.

Just as important, universities could reconsider how they evaluate faculty performance. That does not mean abandoning metrics altogether. Numbers can be useful, especially in large systems that need common criteria. But overreliance on publication counts and citation scores creates exactly the incentives now under scrutiny. More weight could be given to research quality, originality, data-sharing practices, mentoring, field-specific norms and longer-term scholarly contributions rather than raw output alone.

There is also a practical support issue. If institutions expect faculty to publish in English-language international journals, they can invest in ethical, in-house support — writing centers for scholars, editorial assistance, research design consultation and training in publication ethics. In the United States, many major universities offer such support because they recognize that global academic communication requires infrastructure, not just pressure. South Korean universities may need to expand those resources if they want to reduce dependence on private third-party markets.

Finally, reformers argue that graduate students and junior researchers need stronger protection. Clear authorship rules, confidential reporting systems, mentorship training and safeguards against retaliation could help restore confidence among those most vulnerable to abuse. If younger scholars believe raising concerns will end their careers, misconduct will remain hidden and cynicism will deepen.

A warning sign for higher education far beyond Korea

It would be easy for outsiders to treat this as a Korea-specific story, shaped by the country’s famously intense educational culture. That would be a mistake. The particulars may be local, but the underlying pressures are global. Universities everywhere are being measured more aggressively, marketed more competitively and asked to prove value through dashboards, rankings and performance indicators. Whenever careers hinge on visible metrics, industries emerge to help people optimize those metrics.

Americans have seen versions of this in college admissions consulting, standardized test prep and the larger ecosystem of résumé engineering that grows up around high-pressure institutions. Academic publishing is no exception. What South Korea’s current debate reveals, in unusually stark form, is what happens when those pressures move from the periphery to the center of research culture.

The broader lesson is not that rankings are meaningless or that all outside support is suspect. Rather, it is that any system built heavily around measurable outputs will invite a market designed to manufacture or enhance those outputs. If universities want credible research, they cannot simply demand ever more productivity and then act surprised when scholars seek industrial-scale help meeting the demand.

For South Korea, the stakes are especially high. The country has spent decades building universities capable of competing on a global stage. It has succeeded in many respects, producing world-class research and institutions with growing international reach. But prestige built on metrics alone is fragile. In scholarship, as in journalism, public trust is hard won and easily squandered.

The current uproar over “academic mercenaries” is therefore about more than a catchy phrase or the latest campus scandal. It is a warning that a system obsessed with numbers can lose sight of what those numbers were supposed to represent in the first place: knowledge produced honestly, credited fairly and strong enough to stand on its own.
